Adrian Currie, Research Associate at the Centre for the Study of Existential Risk (CSER), is looking at the relationship between the culture of science and our capacity to understand, predict and mitigate low-probability, high-impact events. His work is supported by the Templeton World Charity Foundation as part of the Managing Extreme Technological Risk project.

Q. Adrian, you’ve been a Research Associate at CSER, CRASSH for two years. What do you consider the highlight of your time here at the Centre?

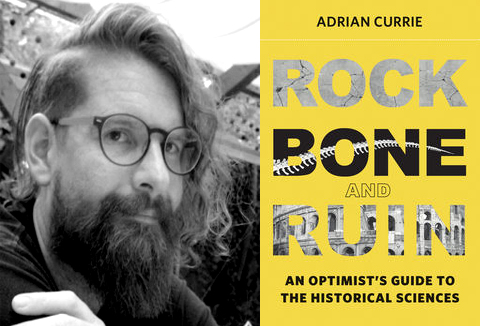

I don’t think I can settle on a single highlight: the two years were incredibly rich with opportunities to engage in interdisciplinary research with brilliant people on important topics. I’m particularly proud of editing a special issue of Futures on Existential and Catastrophic Risk, of helping put together an episode of the Naked Scientists on Existential Risk and Mavericks in science, and of publishing, with a bunch of collaborators, a paper arguing for a new approach to comparative cognitive work. Oh, I published a book too. That was exciting.

Q. During your research, did you stumble across something unexpected that you’d like to share with us?

The thing I was most concerned about going into the job was how interdisciplinary the project is. CSER is a space where philosophers, scientists, policy and legal experts, and others attempt both to do cutting-edge academic work and to engage meaningfully with non-academics, and real engagement across disciplines is often extremely difficult. I have been gratified to see that, with the right organization and people, there is plenty of space for such work.

Q. You will soon be leaving the Centre. Where to next?

I’ve taken up a lectureship at the Department of Sociology, Philosophy and Anthropology at Exeter University. I’ll still be connected with Cambridge, though: this coming academic year, Marta Halina and I will be examining notions of creativity across the sciences with the Leverhulme Centre for the Future of Intelligence, based on a TWCF grant.

- Learn more about the CRASSH community.

- Read about the Centre for the Study of Existential Risk.

- You may also like to read Rock, Bone & Ruin: 5 Questions to Adrian Currie.