About the Author: Lord Martin Rees is a fellow of Trinity College and emeritus professor of Cosmology and Astrophysics at the University of Cambridge. He is the Astronomer Royal and a foreign associate of the US National Academy of Sciences, the American Philosophical Society, and the American Academy of Arts and Sciences. He is the author of more than 500 research papers and eight books, and co-founder of the Centre for the Study of Existential Risk at CRASSH.

Albert Einstein said that the ‘most incomprehensible thing about the Universe is that it is comprehensible’. He was right to be astonished. Human brains evolved to be adaptable, but our underlying neural architecture has barely changed since our ancestors roamed the savannah and coped with the challenges that life on it presented. It’s surely remarkable that these brains have allowed us to make sense of the quantum and the cosmos, notions far removed from the ‘commonsense’, everyday world in which we evolved.

But I think science will hit the buffers at some point. There are two reasons why this might happen. The optimistic one is that we clean up and codify certain areas (such as atomic physics) to the point that there’s no more to say. A second, more worrying possibility is that we’ll reach the limits of what our brains can grasp. There might be concepts, crucial to a full understanding of physical reality, that we aren’t aware of, any more than a monkey comprehends Darwinism or meteorology. Some insights might have to await a post-human intelligence.

Scientific knowledge is actually surprisingly ‘patchy’ – and the deepest mysteries often lie close by. Today, we can convincingly interpret measurements that reveal two black holes crashing together more than a billion light years from Earth. Meanwhile, we’ve made little progress in treating the common cold, despite great leaps forward in epidemiology. The fact that we can be confident of arcane and remote cosmic phenomena, and flummoxed by everyday things, isn’t really as paradoxical as it looks. Astronomy is far simpler than the biological and human sciences. Black holes, although they seem exotic to us, are among the least complicated entities in nature. They can be described exactly by simple equations.

So how do we define complexity? The question of how far science can go partly depends on the answer. Something made of only a few atoms can’t be very complicated. Big things need not be complicated either. Despite its vastness, a star is fairly simple – its core is so hot that complex molecules get torn apart and no chemicals can exist, so what’s left is basically an amorphous gas of atomic nuclei and electrons. Alternatively, consider a salt crystal, made up of sodium and chlorine atoms, packed together over and over again to make a repeating cubical lattice. If you take a big crystal and chop it up, there’s little change in structure until it breaks down to the scale of single atoms. Even if it’s huge, a block of salt couldn’t be called complex.

Atoms and astronomical phenomena – the very small and the very large – can be quite basic. It’s everything in between that gets tricky. Most complex of all are living things. An animal has internal structure on every scale, from the proteins in single cells right up to limbs and major organs. It doesn’t survive being chopped up, the way a salt crystal continues to exist when it is sliced and diced. It dies.

Scientific understanding is sometimes envisaged as a hierarchy, ordered like the floors of a building. Those dealing with more complex systems are higher up, while the simpler ones go down below. Mathematics is in the basement, followed by particle physics, then the rest of physics, then chemistry, then biology, then botany and zoology, and finally the behavioural and social sciences (with the economists, no doubt, claiming the penthouse).

‘Ordering’ the sciences is uncontroversial, but it’s questionable whether the ‘ground-floor sciences’ – particle physics, in particular – are really deeper or more all-embracing than the others. In one sense, they clearly are. As the physicist Steven Weinberg explains in Dreams of a Final Theory (1992), all the explanatory arrows point downward. If, like a stubborn toddler, you keep asking ‘Why, why, why?’, you end up at the particle level. Scientists are nearly all reductionists in Weinberg’s sense. They feel confident that everything, however complex, is a solution to Schrödinger’s equation – the basic equation that governs how a system behaves, according to quantum theory.

But a reductionist explanation isn’t always the best or most useful one. ‘More is different,’ as the physicist Philip Anderson said. Everything, no matter how intricate – tropical forests, hurricanes, human societies – is made of atoms, and obeys the laws of quantum physics. But even if those equations could be solved for immense aggregates of atoms, they wouldn’t offer the enlightenment that scientists seek.

Macroscopic systems that contain huge numbers of particles manifest ‘emergent’ properties that are best understood in terms of new, irreducible concepts appropriate to the level of the system. Valency, gastrulation (when cells begin to differentiate in embryonic development), imprinting, and natural selection are all examples. Even a phenomenon as unmysterious as the flow of water in pipes or rivers is better understood in terms of viscosity and turbulence, rather than atom-by-atom interactions. Specialists in fluid mechanics don’t care that water is made up of H2O molecules; they can understand how waves break and what makes a stream turn choppy only because they envisage liquid as a continuum.

New concepts are particularly crucial to our understanding of really complicated things – for instance, migrating birds or human brains. The brain is an assemblage of cells; a painting is an assemblage of chemical pigment. But what’s important and interesting is how pattern and structure appear as we go up the layers, what can be called emergent complexity.

So reductionism is true in a sense. But it’s seldom true in a useful sense. Only about 1 per cent of scientists are particle physicists or cosmologists. The other 99 per cent work on ‘higher’ levels of the hierarchy. They’re held up by the complexity of their subject, not by any deficiencies in our understanding of subnuclear physics.

In reality, then, the analogy between science and a building is quite a poor one. A building’s structure is imperilled by weak foundations. By contrast, the ‘higher-level’ sciences dealing with complex systems aren’t vulnerable to an insecure base. Each layer of science has its own distinct explanations. Phenomena with different levels of complexity must be understood in terms of different, irreducible concepts.

We can expect huge advances on three frontiers: the very small, the very large, and the very complex. Nonetheless – and I’m sticking my neck out here – my hunch is there’s a limit to what we can understand. Efforts to understand very complex systems, such as our own brains, might well be the first to hit such limits. Perhaps complex aggregates of atoms, whether brains or electronic machines, can never know all there is to know about themselves. And we might encounter another barrier if we try to follow Weinberg’s arrows further down: if this leads to the kind of multi-dimensional geometry that string theorists envisage. Physicists might never understand the bedrock nature of space and time because the mathematics is just too hard.

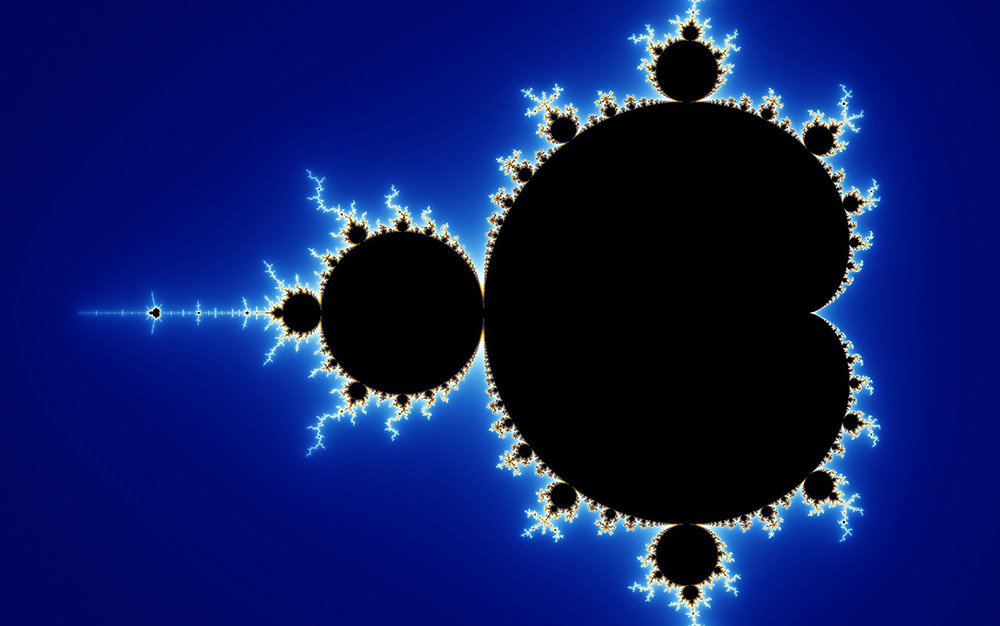

My claim that there are limits to human understanding has been challenged by David Deutsch, a distinguished theoretical physicist who pioneered the concept of ‘quantum computing’. In his provocative and excellent book The Beginning of Infinity (2011), he says that any process is computable, in principle. That’s true. However, being able to compute something is not the same as having an insightful comprehension of it. The beautiful fractal pattern known as the Mandelbrot set is described by an algorithm that can be written in a few lines. Its shape can be plotted even by a modest-powered computer:

Mandelbrot set. Courtesy Wikipedia.

But no human who was just given the algorithm can visualise this immensely complicated pattern in the same sense that they can visualise a square or a circle.
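To make the point concrete, here is a minimal sketch of that algorithm in Python. It is an illustration rather than anything taken from Deutsch or from the plot above: the function names `escape_time` and `render`, the iteration cap of 50, and the crude text-mode plotting window are all assumptions chosen for brevity. The rule itself is the standard one: a point c belongs to the set if repeatedly applying z → z² + c, starting from z = 0, never drives z beyond a distance of 2 from the origin.

```python
def escape_time(c: complex, max_iter: int = 50) -> int:
    """Count iterations of z -> z*z + c (from z = 0) before |z| exceeds 2.

    Returns max_iter if z stays bounded for the whole run, which we take
    (approximately) to mean that c lies in the Mandelbrot set.
    """
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2.0:
            return n
        z = z * z + c
    return max_iter


def render(width: int = 60, height: int = 24, max_iter: int = 50) -> str:
    """Draw the region -2 <= x <= 1, -1.2 <= y <= 1.2 as rows of text."""
    rows = []
    for j in range(height):
        y = -1.2 + 2.4 * j / (height - 1)
        row = ""
        for i in range(width):
            x = -2.0 + 3.0 * i / (width - 1)
            inside = escape_time(complex(x, y), max_iter) == max_iter
            row += "#" if inside else " "
        rows.append(row)
    return "\n".join(rows)


if __name__ == "__main__":
    print(render())
```

The whole rule fits in the body of `escape_time`, yet nothing in those few lines hints at the filigree that appears when the output is plotted at high resolution.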

The chess-champion Garry Kasparov argues in Deep Thinking (2017) that ‘human plus machine’ is more powerful than either alone. Perhaps it’s by exploiting the strengthening symbiosis between the two that new discoveries will be made. For example, it will become progressively more advantageous to use computer simulations rather than run experiments in drug development and material science. Whether the machines will eventually surpass us to a qualitative degree – and even themselves become conscious – is a live controversy.

Abstract thinking by biological brains has underpinned the emergence of all culture and science. But this activity, spanning tens of millennia at most, will probably be a brief precursor to the more powerful intellects of the post-human era – evolved not by Darwinian selection but via ‘intelligent design’. Whether the long-range future lies with organic post-humans or with electronic super-intelligent machines is a matter for debate. But we would be unduly anthropocentric to believe that a full understanding of physical reality is within humanity’s grasp, and that no enigmas will remain to challenge our remote descendants.

Author: Lord Martin Rees

• This article was originally published at Aeon and has been republished under Creative Commons.